Digitalization brings moral dilemmas that we would not otherwise face. Making an ethical judgment is a decision-making process that involves collecting and evaluating information, and considering and assessing the consequences of alternative choices. It is both a rational and an emotional process, and it depends on an individual’s personal and social context and values. It is often not as black and white as in one of the most famous thought experiments, the self-driving car.

Sometimes the decisions are not about life and death but can still have far-reaching consequences. Banks have for years been using so-called credit score calculators when deciding on loans to citizens. Public institutions increasingly rely on statistical profiling when deciding whether to grant certain social services. Companies rely more and more on automated recruitment tools, which can be gender-biased. A more recent example is the debate around the Corona immunity pass: while some argue against it to avoid stigmatization and marginalization, others promote it for public health reasons. Some issues have been given partial legal certainty, while many others are yet to be tackled.

In this blog post I list three aspects that we should keep in mind when addressing ethical issues in the digital world.

Digital divide

In a recent blog post (in German only) I wrote about the digital divide between students who have access to technology and those who don’t. Such a seemingly simple precondition may decide who will pass the semester exams and who will not. It is almost paradoxical, given that digitalization has in fact expanded the possibility of accessing resources on a global scale. Where knowledge could previously be obtained only through a university or the local library, we now have the whole internet at our disposal. We can attend online classes (even for free) and obtain research data with a click. And this is just one example from an educational context.

As is the case with other, more traditional resources, the benefits of digitalization are not equally distributed. The speed of digital transformation makes it difficult to keep up with its advantages and address its disadvantages. It has been further accelerated by the extraordinary times of the Corona pandemic. Thus, having access to digital resources may in the future become a matter of economic survival for companies, of political influence for political actors, and of social participation for citizens.

Recognizing such new types of inequalities becomes a moral imperative for (digital) societies. Which brings us to the next aspect:

Digital literacy

The ability to collect and evaluate information, and to consider alternatives and their consequences, is at the core of the ethical decision-making process. The digital world has given us unprecedented access to information; however, the ability to assess it critically is a matter of literacy. Thus, data literacy becomes an important competence in modern digital societies.

- Do I always use the same internet search engine and click on the first few results? I think many of us do.

- Am I using digital tools despite their privacy concerns? Do I even know what these concerns are? I bet many of us just click away the data protection statement because, let’s face it, reading those long statements is a tedious task and something that only lawyers would understand (?!)

- Do I share and like posts too easily? Can I distinguish the interests that lie behind a post? Is it a fact, an advertisement or an opinion?

“Fake news” is said to spread more easily than “real news”. At least many of us here in Germany know what “fake news” means (68 percent), which cannot be said of algorithms (43 percent) or bots (22 percent).

So how can we make decisions when we don’t even know what the terms for new technologies stand for and what the effects of their use are? And what about those cases where we are prevented from making the decision altogether because the data has already been processed and evaluated for us?

Artificial Intelligence in Decision-making

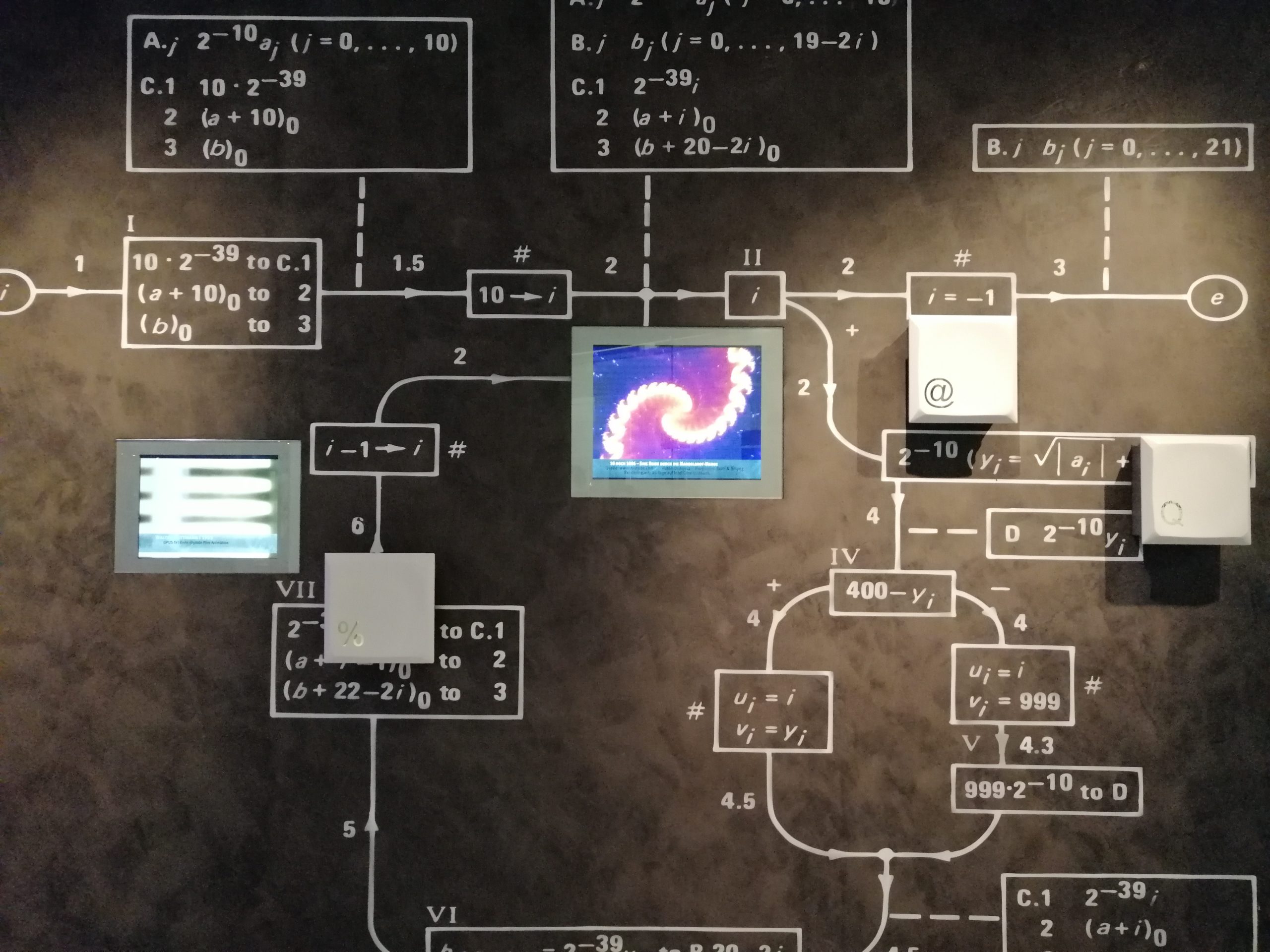

This is what happens when artificial intelligence is employed. Based on data and a set of algorithms, a machine tries to make decisions as humans would. The data is the information that is available to the machine or fed into it. The algorithm is the process containing the steps to arrive at a certain conclusion. So there are at least two kinds of problems when relying on computer-based decisions, and each of them can raise ethical issues:

- Data-related: How has the data been collected? Are there any privacy issues? Is the dataset neutral or does it give a distorted view of certain groups of people (based on their online footprint or gender, ethnic and other characteristics)?

- Algorithm-related: Do we know how the machine learns? What sort of (statistical) models are in place? What sort of online behaviors are thus promoted?
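To make the data-related problem a bit more tangible, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the records are made up, the groups "A" and "B" are placeholders, and the "algorithm" is deliberately naive (it only learns past hiring rates per group). Real recruitment tools are far more complex, but the sketch shows how a skewed dataset can turn into a skewed decision.

```python
from collections import defaultdict

# Hypothetical, made-up history: (group, years_of_experience, was_hired).
# The two groups have similar experience, but group "B" was rarely hired.
HISTORY = [
    ("A", 5, True), ("A", 2, True), ("A", 1, False), ("A", 4, True),
    ("B", 5, False), ("B", 6, True), ("B", 2, False), ("B", 4, False),
]

def learn_hire_rates(records):
    """Naive 'algorithm': learn the past hiring rate for each group."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, _experience, was_hired in records:
        total[group] += 1
        hired[group] += int(was_hired)
    return {group: hired[group] / total[group] for group in total}

def decide(rates, group, threshold=0.5):
    """Recommend a candidate if their group's past hiring rate reaches the threshold."""
    return rates[group] >= threshold

rates = learn_hire_rates(HISTORY)
print(rates)                                   # learned hiring rate per group
print(decide(rates, "A"), decide(rates, "B"))  # recommendation per group
```

The machine never looks at the individual candidate here. It simply repeats the pattern in the data it was fed, which is why the question of how the data was collected matters so much.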

To answer these questions we need both transparency and expert knowledge, something that in my view will not be achievable anytime soon. These processes are not only complex but are also often treated as company secrets. Consequently, society will need to find solutions to ethical conflicts such as advocating public interests while protecting personal and company data.

In the article “Automated decision-making systems and the fight against COVID-19”, the non-profit research and advocacy organisation AlgorithmWatch points to solutions that reconcile digital contact tracing with a more rights-preserving approach. AlgorithmWatch further argues that, with the increased use of technology in the delivery of services, automated decision-making could “have catastrophic consequences for citizens who have no access to or no means to critically understand digital tools”.

I would add that we all need a better understanding of digitalization so that we can exercise our rights as citizens and demand that economic and political actors uphold them.

P.S. As you know by now, I like online courses. So if you wish to learn more about the workings of artificial intelligence, I would recommend the free online course Elements of AI.

Top photo: private.
